Network motivations

An interdisciplinary algorithmic listening

By Alice Eldridge

This blog is intended as a scratch pad for discussions. In these opening posts Co-I Dr Paul Stapleton and I will share our motivations for instigating this network; network participants are also warmly invited to share relevant research or reflections, so please be warned that we may approach you for a contribution, and feel free to offer some words if there is a pertinent topic you would like to think through.

There is a broad mix of disciplines represented, and it may be that any two participants have very little in common. This is intentional. The network was motivated, in part, by recent personal experiences of working across research communities – computer science, interactive music, soundscape ecology and most recently digital humanities. I have been struck – both confused and inspired – by the differences in attitudes, concerns, approach and ambitions of different disciplines with respect to the use of technology in general, and machine listening in particular, in research and practice. In this first post I will outline some of the personal motivations for the network, and consider some of the ways in which we might fruitfully ‘humanise’ algorithmic listening.

Exosomatic listening organs: The promise of new sonic prostheses

For celebrants of new technology, machine listening is a key enabler, holding the promise of unlocking new ways to understand and interact with the world. Decades of work in speech processing and music information retrieval have produced machine listening algorithms capable of accurately recognising aspects of human speech, or of music, such as genre, instrument, and melodic or rhythmic features. Current research is exploring how machine listening could open up new ways of understanding and engaging with our lived environments across many other domains in research and industry: from digital humanities to conservation biology, industrial monitoring to audio archive management, we are learning to listen with algorithms.

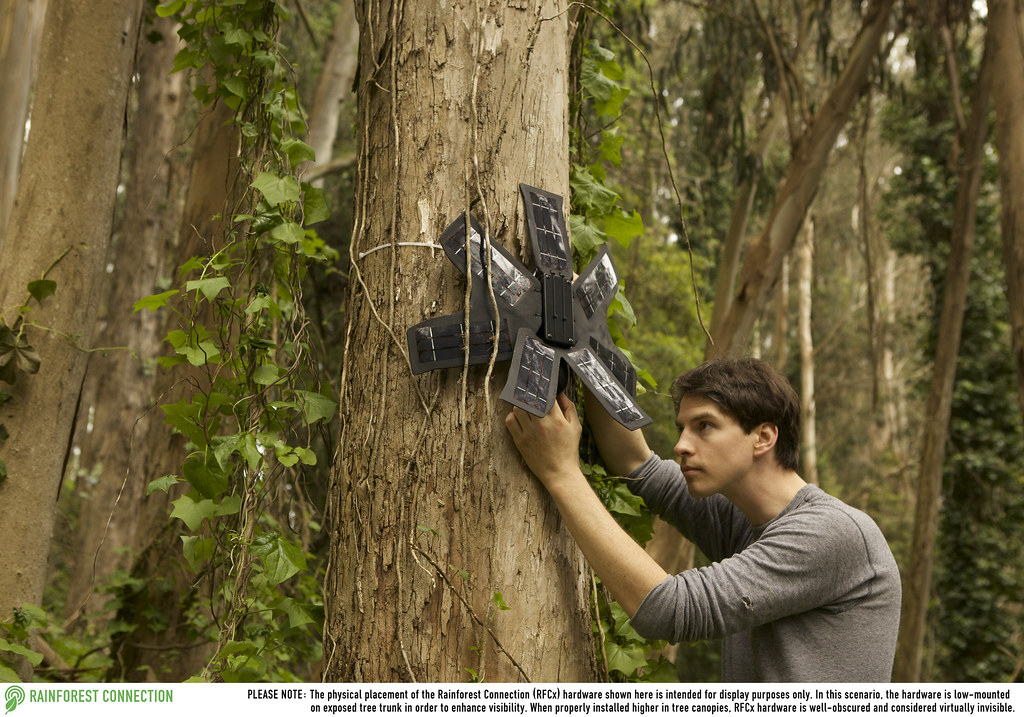

Rainforest Connection’s upcycled mobile phones use automatic chainsaw detection algorithms and text messaging to alert forest wardens to illegal logging in protected tropical forests.

With ecology and engineering colleagues I am working on the development of acoustic indices as a tool for rapid biodiversity assessment, and on the development of low-power hardware meshworks to create acoustic biodiversity monitoring systems. This work is driven by an urgent, global need for efficient and effective ways to monitor the planet’s critically endangered species, and to understand and evidence the impact of extractive industries on the fast-disappearing pristine rainforests. In the race to develop new acoustic indices, fundamental oversights are being made regarding basic properties of digital audio recording, and these seriously compromise the effectiveness of this potentially transformative tool. Beyond the need for more technical research, ethical considerations around the use of pervasive acoustic monitoring in the wild are yet to make it onto the agenda.

- Humanising Algorithmic Listening might mean exploiting (rather than overlooking) the fundamental differences between machine and human listening in order to design meaningful high-level algorithms in new application domains.

- Humanising Algorithmic Listening might mean considering the rights of humans even where the wellbeing of other species is the primary concern.

A similar enthusiasm for computational methods to afford ‘distant or close listening’ is shared by researchers in the Digital Humanities. The fundamentally aural nature of poetry and oral history has traditionally been overlooked in humanities research, where textual sources are most common. Interviews are transcribed; the semantics of the written word are studied, but the expression, intonation and rhythm of the voice are seldom preserved. Just as musically meaningful features have been built from low-level audio features for application in Music Information Retrieval, there is huge potential for humanities researchers to engage with large oral and sonic archives in new ways using algorithmic methods. Responses to an exploratory workshop on the application of machine listening in Oral History, which we ran at DH2016 and for arts and humanities doctoral students, suggest this is a rich future research direction.

- Humanising Algorithmic Listening might mean designing new audio features and machine listening methods for use in humanities research.

Pervasive listening machines: Anxiety over always-on devices

Enthusiasm over new applications of machine listening in research is balanced by social anxiety over their increasing incorporation into everyday consumer devices. Amazon’s Echo, Google’s Home, Apple’s Siri, Microsoft’s Cortana, and Mattel’s Hello Barbie are all part of an emerging range of voice-activated products that record audio and conversations from phones, wearables, and in-home agents. Recent controversy over access to the recording archive of an Amazon Echo in a US murder trial raises questions around privacy and the Internet of Things in general, but these ‘always-on’ listening devices are seen to be particularly problematic: they are pervasive, appearing in all aspects of our lives, and able to listen in all directions; they are persistent, with no current legislation over how long records are stored; and they process the data they collect, seeking to understand what people are saying and acting on what they are able to understand.

This insidious surveillance contributes to widespread social anxieties around automation as it impacts both labour and recreational activities. This automation anxiety is a primary research topic for colleagues in our lab, where doctoral students are also questioning, through practice-based research, the ethical implications of these always-on listening devices in everyday consumer products.

- Humanising Algorithmic Listening might mean developing new ethical frameworks to keep corporate and commercial applications of machine listening in check.

Mattel’s controversial Hello Barbie uses speech recognition and progressive learning to create “the first fashion doll that can have two-way conversation with girls”. The girls’ conversations are stored on a remote server. It is not clear if it will also talk with boys.

Unpacking black boxes through listening

Part of this social anxiety stems from the unknown. There is no equivalent of an ingredients list for these algorithmically enhanced consumer devices; commercial companies are not required to disclose their algorithms. From a public perspective, these are black boxes: the details of their operation are a Great Unknown. In some cases, the workings of bleeding-edge machine listening and learning algorithms are equally elusive to the software developers who created them. For example, in the case of the multi-layered neural networks used in Deep Learning, the maths is well understood, but in many cases we don’t know why a particular model is successful and we can’t predict its response to a particular input without actually trying it.

The extraordinary minds at DeepMind are no doubt developing analytical understandings and making a concerted effort to communicate the science of deep learning. In a poetic turn, others are attempting to deepen our understanding of how deep learning for machine listening operates by listening to the learned features in a convolutional neural network (check out Susurrant too). Through sonification, rather than formal analysis, we can gain an intuitive understanding of how each layer functions, in sonic terms. In a later blog post, network member David Kant will introduce his Happy Valley Band project, which unpacks source separation and other machine listening algorithms by deconstructing the Great American Songbook and performing human recompositions of machine decompositions live.

- Humanising Algorithmic Listening might mean enriching both our formal and intuitive understandings of how extant and emerging machine listening algorithms work in sonic terms.

Understanding human-machine agency in interactive performance

A belief in the value of practice-based, creative methods in enriching our understanding of technological mediation is common to many network members, and machine listening has been extensively explored and developed in interactive music systems for decades. By imbuing computers with even simple pitch and amplitude tracking abilities we can build software instruments which we can interact with in fundamentally different ways from traditional acoustic, electronic, or even manually controlled digital instruments. This was my first introduction to machine listening. As a cello-playing PhD student I was interested in how to build software performance systems which could convincingly improvise with a human instrumentalist, the litmus test being the ability to make convincing musical responses and, more ambitiously, suggestions. When performing with software systems, rather than formally analysing them, we gain a different, experiential understanding of machine listening and its role in melding human-machine agencies. This will be the main focus of workshop 2 and the topic of a future blog post by Paul Stapleton.

- Humanising Algorithmic Listening might mean experientially probing human-machine agency through speculative, experimental and performative investigations.

Algorithmic listening: Utopia, dystopia or inevitable?

The range of concerns and ambitions present today resonates with positions held throughout the history of the Philosophy of Technology: from utopian views of technology post-Enlightenment, through twentieth-century dystopian warnings on the need to limit the rush of technological invention (Habermas, Hans Jonas etc.), to contemporary views that we are natural-born cyborgs, inevitably intertwined with technologies of all kinds (Clark, Latour, Haraway etc.).

Toward a well-rounded design for future hybrid ears

The aim of this network is not to reconcile these different perspectives, but to curate conversations across contemporary research communities so that technical advances, practical ambition and creative investigation both inform and are nourished by critical, philosophical perspectives on how technology mediates our existence. The integration of technical, philosophical, creative and practical perspectives may be messy at first, but it is necessary if we are to design and manage the applications of effective, ethical and meaningful listening algorithms for the future.

Follow us on Twitter to get alerts for future blog posts.
